Parameters to Know for the Best AI Art in Stable Diffusion

So, you have generated a number of Stable Diffusion AI images. They looked fantastic but weren’t quite what you wanted? A little customisation can help. Here is an overview of the fundamental generation parameters you can adjust.

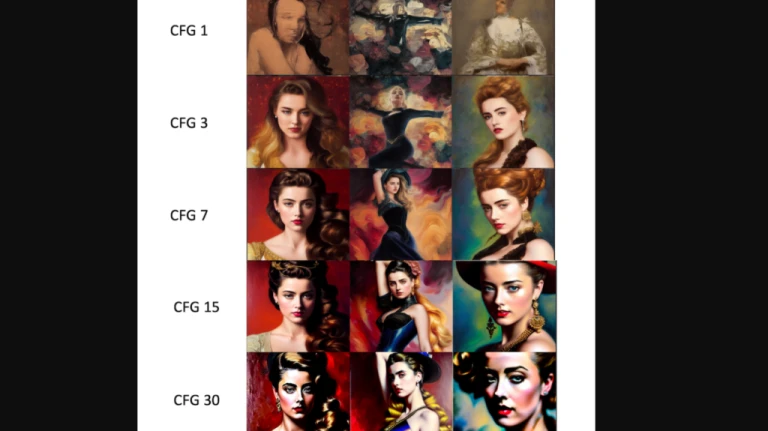

CFG Scale for Stable Diffusion AI

The Classifier-Free Guidance (CFG) scale is a parameter that controls how closely the model should adhere to your prompt.

1 – Mostly ignore your prompt.

3 – Be more creative.

7 – A good balance between following the prompt and freedom.

15 – Adhere more to prompt.

30 – Strictly follow the prompt.

The following examples use the same random seed and show the effect of increasing the CFG scale. Generally speaking, you should steer clear of the two extremes, 1 and 30.
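To make the role of the scale concrete, here is a minimal sketch of the guidance formula as it is commonly described: the model makes one noise prediction without the prompt and one with it, and the scale controls how far the result is pushed toward the prompt-conditioned one. The function name `apply_cfg` and the toy three-element vectors are illustrative assumptions, not code from any particular GUI.

```python
def apply_cfg(uncond, cond, scale):
    """Combine unconditional and prompt-conditioned predictions.

    Commonly described guidance formula: uncond + scale * (cond - uncond).
    """
    return [u + scale * (c - u) for u, c in zip(uncond, cond)]


# Toy stand-ins for the two noise predictions (made-up numbers).
uncond = [0.0, 1.0, 2.0]
cond = [1.0, 1.0, 0.0]

for scale in (1, 7, 15):
    print(scale, apply_cfg(uncond, cond, scale))
```

In this formulation a scale of 1 simply returns the prompt-conditioned prediction with no extra amplification, while larger values exaggerate the difference between the two predictions, which is why very high settings can look overcooked.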

CFG Scale for Stable Diffusion AI

Sampling Steps

Quality improves as the number of sampling steps increases. The Euler sampler typically needs about 20 steps to produce a sharp, good-quality image. Beyond that, the image will still change subtly as you add steps, but it will not necessarily get better. If you think the quality is poor, increase the number of steps.

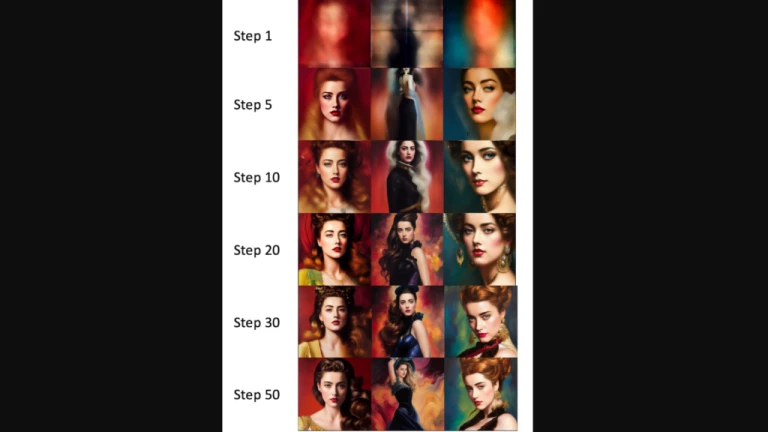

Increase in Sampling Steps

Sampling Methods

Depending on the GUI you are using, you can choose from a variety of sampling methods. Simply put, they are different strategies for numerically solving the diffusion equations. In principle they should produce the same outcome, but because of numerical differences the results may vary slightly. Since there is no single correct answer here and the only criterion is whether the image looks good, you shouldn’t worry about the method’s accuracy.
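The idea that different methods solve the same equation with slightly different numerical results can be illustrated on a toy problem. The sketch below is not the actual diffusion equation: it assumes a simple decay equation, dy/dt = -y, and compares the Euler method with Heun's method (both names also appear as samplers in many GUIs) at several step counts.

```python
import math


def euler(f, y0, t_end, steps):
    """First-order Euler method: one slope evaluation per step."""
    h = t_end / steps
    y, t = y0, 0.0
    for _ in range(steps):
        y += h * f(t, y)
        t += h
    return y


def heun(f, y0, t_end, steps):
    """Heun's method: average the slopes at the start and end of each step."""
    h = t_end / steps
    y, t = y0, 0.0
    for _ in range(steps):
        k1 = f(t, y)
        k2 = f(t + h, y + h * k1)
        y += h * (k1 + k2) / 2
        t += h
    return y


def decay(t, y):
    # Toy equation dy/dt = -y, whose exact solution at t=1 is exp(-1).
    return -y


exact = math.exp(-1.0)
for steps in (5, 20, 100):
    err_euler = abs(euler(decay, 1.0, 1.0, steps) - exact)
    err_heun = abs(heun(decay, 1.0, 1.0, steps) - exact)
    print(f"{steps:3d} steps  euler error={err_euler:.5f}  heun error={err_heun:.5f}")
```

Both methods head toward the same answer, and more steps shrink the error for either one. That mirrors the behaviour described for samplers: similar results overall, small numerical differences, and diminishing returns once the step count is high enough.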

Online communities argue about whether certain sampling methods tend to produce particular styles. Theoretically, this has no basis.

Seed in Stable Diffusion AI

The random seed determines the initial noise pattern and, therefore, the final image.

If you set it to -1, a random seed is chosen every time. This is helpful when you want to create new images. On the other hand, if the seed is fixed, every new generation produces the same image as long as the other settings stay the same.
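The relationship between the seed and the starting noise can be sketched with Python's standard `random` module. Real implementations use their own tensor generators, so the helper names `resolve_seed` and `initial_noise`, the 32-bit seed range, and the four-value "noise" list here are all illustrative assumptions.

```python
import random


def resolve_seed(seed):
    """Treat -1 as 'pick a fresh random seed'; otherwise keep the given seed."""
    if seed == -1:
        return random.randrange(2**32)  # assumed seed range for illustration
    return seed


def initial_noise(seed, n=4):
    """Generate a small Gaussian noise pattern that depends only on the seed."""
    rng = random.Random(seed)
    return [rng.gauss(0.0, 1.0) for _ in range(n)]


fixed = resolve_seed(4239744034)
print(initial_noise(fixed) == initial_noise(fixed))      # same seed, same noise
print(initial_noise(fixed) == initial_noise(fixed + 1))  # different seed, different noise
```

Because the noise is a pure function of the seed, fixing the seed while changing one other parameter is a convenient way to see what that parameter does.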

If you use a random seed, how can you find out which seed was used for an image? The dialogue box should show something like:

Steps: 20, Sampler: Euler a, CFG scale: 7, Seed: 4239744034, Size: 512×512, Model hash: 7460a6fa

You can then simply copy this seed value into the seed input box. If you generate multiple images at once, the seed for each successive image is this number incremented by one.
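If you would rather pull the seed out of that line programmatically, a minimal sketch follows. It assumes the info line is always a comma-separated list of `key: value` pairs as in the sample above, and that each successive image in a batch uses the previous seed plus one; `parse_info` is a hypothetical helper, not part of any GUI.

```python
def parse_info(line):
    """Split a 'key: value, key: value' info line into a dictionary."""
    params = {}
    for part in line.split(", "):
        key, _, value = part.partition(": ")
        params[key] = value
    return params


info = ("Steps: 20, Sampler: Euler a, CFG scale: 7, Seed: 4239744034, "
        "Size: 512×512, Model hash: 7460a6fa")

params = parse_info(info)
seed = int(params["Seed"])

# Assumed convention: image i in a batch uses seed + i.
batch_seeds = [seed + i for i in range(4)]
print(seed)
print(batch_seeds)
```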

Image Size

Because Stable Diffusion was trained on 512×512 images, setting it to portrait or landscape sizes may cause unexpected problems. Keep the image square when possible. A size of 512×512 is recommended.

Batch Size

Because the final images depend heavily on the random seed, generating a few images at once is always a good idea. This gives you a better sense of what the current prompt can produce. A batch size of 4 or 8 is recommended.

Restoration of Faces in Stable Diffusion AI

A dirty little secret of Stable Diffusion is that it frequently has problems with faces and eyes. Restore faces is a post-processing step that uses an AI model trained specifically to repair faces in images.

Check the box next to “Restore faces” to activate it. Select CodeFormer under Face restoration model on the Settings tab.

Here are two images. The one on the left has no face restoration; the one on the right has face restoration applied. Turning on Restore faces is recommended when generating images with faces.

Left: Original Image, Right: Image after Face Restoration
