What is Glaze? Protecting Artists from Style Mimicry

Since the rise of AI-generated artwork, concerns have been raised that artists’ work is being copied and used without their permission. The Glaze program offers something of a solution.

What is Glaze?

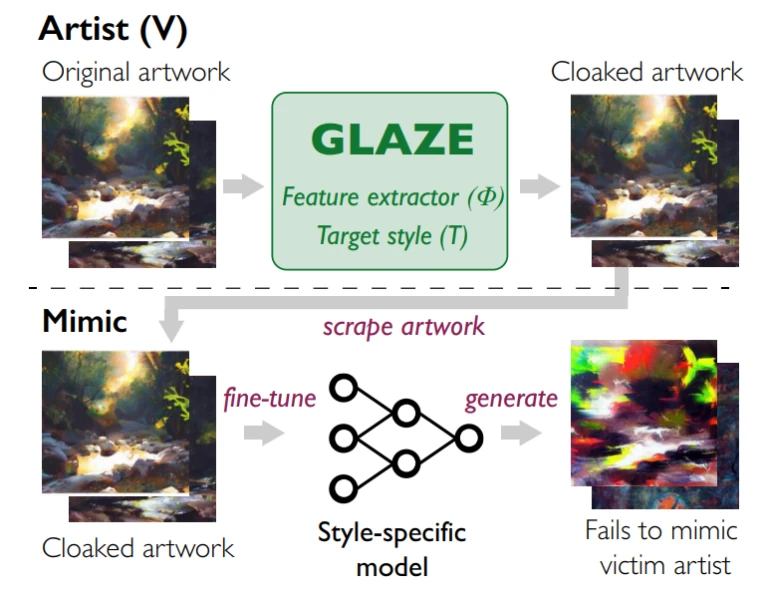

Glaze is a “cloaking” layer designed to prevent AI-based imitation of an artist’s style from succeeding. It was created by researchers at the University of Chicago. An AI model trained on cloaked pictures is misled into perceiving their style as something completely different.

AI image generators like DALL-E, Stable Diffusion, and Midjourney create extraordinary images from simple text prompts. They’re fun, and like many AI tools, they’re improving and evolving rapidly.

As part of their training, they ingest more or less the entire history of popular art, to the point where you can commission your own Picassos and Van Goghs.

How does Glaze work?

To interfere with AI models’ ability to read the data in artworks, the program makes subtle changes to them.

First, the artist’s images are recreated in the style of a famous past artist, for example Vincent van Gogh, using a style-transfer AI.

The style-transferred image is then used as the target of a computation that alters the original image toward it. The computation aims to maximize the misleading patterns that an AI might detect while minimizing the visual impact of the changes.
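The idea can be illustrated with a toy sketch. It is not Glaze's actual code: a real system would compare images with a learned feature extractor and a perceptual measure of visible change, whereas here a fixed random linear projection and a simple per-pixel limit stand in for both. The names `toy_features`, `cloak`, `projection`, and `budget` are hypothetical and chosen only for illustration.

```python
import numpy as np


def toy_features(image, projection):
    """Stand-in feature extractor (assumption): a fixed linear projection of the pixels."""
    return projection @ image.ravel()


def cloak(original, style_target, projection, budget=0.05, steps=200):
    """Nudge `original` toward `style_target` in feature space.

    Each pixel may change by at most `budget`, and the result stays in [0, 1].
    Uses projected gradient descent on the squared feature distance.
    """
    target_feat = toy_features(style_target, projection)

    # Per-pixel bounds on the perturbation: small change, valid pixel range.
    lower = np.maximum(-budget, -original)
    upper = np.minimum(budget, 1.0 - original)

    # Safe step size for this quadratic objective: 1 / (2 * largest singular value squared).
    step = 1.0 / (2.0 * np.linalg.norm(projection, 2) ** 2)

    delta = np.zeros_like(original)
    for _ in range(steps):
        diff = toy_features(original + delta, projection) - target_feat
        grad = (2.0 * projection.T @ diff).reshape(original.shape)
        # Gradient step, then project back into the allowed range.
        delta = np.clip(delta - step * grad, lower, upper)

    return np.clip(original + delta, 0.0, 1.0)


# Example with random 8x8 grayscale "images" (values in [0, 1]).
rng = np.random.default_rng(0)
original = rng.random((8, 8))
style_target = rng.random((8, 8))         # pretend this is the style-transferred copy
projection = rng.standard_normal((16, 64))

cloaked = cloak(original, style_target, projection)
print("largest pixel change:", np.max(np.abs(cloaked - original)))
```

The cloaked image differs from the original by at most `budget` per pixel, yet its features sit closer to those of the style-transferred target. That is the trade-off described above: a large shift in what a model sees, a small shift in what a person sees.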

Glaze model workflow

However, people are testing Glaze by using Photoshop’s denoising to remove as much of the noise added by the software as possible, while trying to recover the original image at the best possible quality.

Photoshop denoising

This time I tested Glaze, an AI training preventing tool. #AI https://t.co/A0taT8xqyI pic.twitter.com/HM46o8gPfr

— toyxyz (@toyxyz3) March 17, 2023

Conclusion

Glaze’s team does not expect this technique to work for very long. They say it is not a permanent solution because “AI evolves quickly, and systems like Glaze face the challenge of being future-proof.”

Today’s cloaking techniques might not hold up against future advances in AI.

