Riffusion: Stable Diffusion for Real-Time Music Generation

You may be familiar with Stable Diffusion, the open-source AI model that creates images from text prompts and has received a lot of attention. Seth Forsgren and Hayk Martiros recently built Riffusion as a “hobby project” that uses a similar approach to turn text into music. AI-generated music is already a cutting-edge idea, but Riffusion takes a clever and unusual route: it generates images of audio rather than audio itself, and the resulting music is strange and intriguing.

How does Riffusion work?

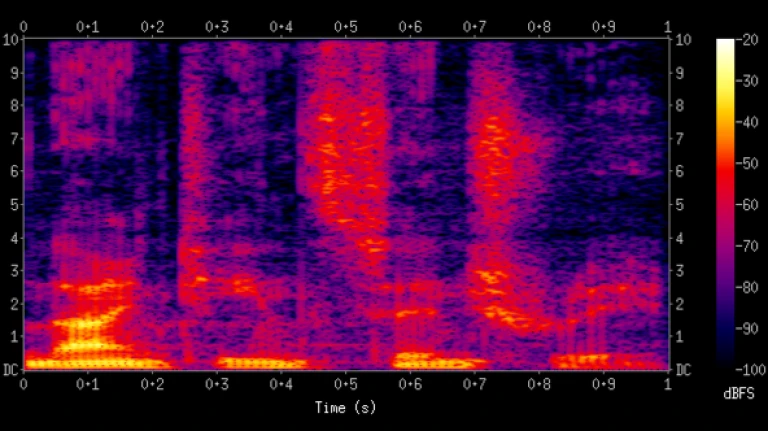

Riffusion generates images of spectrograms, which are then converted into audio clips. Its creators say that varying the “seed” yields an unlimited number of variations on the same text prompt.

The designers of Riffusion explain that the Short-time Fourier transform (STFT), which approximates audio as a mixture of sine waves with different amplitudes and phases, can be used to construct a spectrogram from audio.
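
To make the STFT idea concrete, here is a minimal sketch in plain Python. It is not Riffusion's code: the function names, the frame size, the hop length, and the test tone are all assumptions chosen for illustration, and it uses a slow, naive DFT so that it needs nothing beyond the standard library. It slices a signal into overlapping windowed frames and records the magnitude of each frequency bin, which is the grid of values a spectrogram image displays.

```python
import cmath
import math


def hann(n):
    """Periodic Hann window of length n, used to taper each frame."""
    return [0.5 - 0.5 * math.cos(2 * math.pi * i / n) for i in range(n)]


def stft_magnitude(samples, frame_size=64, hop=32):
    """Return one list of bin magnitudes per frame (bins 0..frame_size // 2).

    frame_size and hop are illustrative defaults, not values from Riffusion.
    """
    window = hann(frame_size)
    frames = []
    for start in range(0, len(samples) - frame_size + 1, hop):
        chunk = [samples[start + i] * window[i] for i in range(frame_size)]
        magnitudes = []
        for k in range(frame_size // 2 + 1):
            # Naive DFT of one frame: correlate it with a complex sinusoid.
            acc = sum(
                chunk[n] * cmath.exp(-2j * math.pi * k * n / frame_size)
                for n in range(frame_size)
            )
            magnitudes.append(abs(acc))  # keep the magnitude, drop the phase
        frames.append(magnitudes)
    return frames


# Hypothetical test signal: a pure 1000 Hz tone sampled at 8000 Hz.
sample_rate = 8000
tone_hz = 1000
samples = [math.sin(2 * math.pi * tone_hz * t / sample_rate) for t in range(512)]

spec = stft_magnitude(samples)
peak_bin = max(range(len(spec[0])), key=lambda k: spec[0][k])
peak_hz = peak_bin * sample_rate / 64
print(f"{len(spec)} frames, strongest bin in first frame: {peak_bin} ({peak_hz} Hz)")
```

Each row of `spec` is one column of the spectrogram image (one moment in time), and each entry in a row is the strength of one frequency band. A practical implementation would use an FFT routine from a numerical library rather than the nested loops shown here.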

Riffusion relies on fine-tuning: giving an already-trained model a large amount of one specific kind of content so that it specializes in producing more examples of that content. The technique has proven effective in various domains, and Stable Diffusion is particularly amenable to it. For instance, you could fine-tune the model on paintings or on images of vehicles, and it would become better at generating them.

The web application lets you enter prompts and keeps producing interpolated content in real time for as long as you let it, alongside a 3D visualization of the spectrogram. If you skip ahead without supplying a next prompt, Riffusion interpolates between different seeds of the current prompt.
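
The article does not say how this interpolation is computed. One common choice for blending the random starting noise of diffusion models is spherical linear interpolation (slerp), so the sketch below uses it purely as an assumption. The helpers `noise_from_seed` and `slerp` are hypothetical names, and the 16-value vectors stand in for the much larger noise tensors a real model would use.

```python
import math
import random


def noise_from_seed(seed, size=16):
    """Deterministic Gaussian noise vector for a given seed (illustrative size)."""
    rng = random.Random(seed)
    return [rng.gauss(0.0, 1.0) for _ in range(size)]


def slerp(t, a, b):
    """Spherical linear interpolation between vectors a and b, with t in [0, 1]."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    cos_omega = max(-1.0, min(1.0, dot / (norm_a * norm_b)))
    omega = math.acos(cos_omega)  # angle between the two vectors
    sin_omega = math.sin(omega)
    if abs(sin_omega) < 1e-6:
        # Vectors are (anti)parallel: fall back to plain linear interpolation.
        return [(1 - t) * x + t * y for x, y in zip(a, b)]
    weight_a = math.sin((1 - t) * omega) / sin_omega
    weight_b = math.sin(t * omega) / sin_omega
    return [weight_a * x + weight_b * y for x, y in zip(a, b)]


# Two seeds for the same prompt give two different starting points.
start = noise_from_seed(1)
end = noise_from_seed(2)

# Five evenly spaced steps from the first seed's noise to the second's.
steps = [slerp(i / 4, start, end) for i in range(5)]
```

In a setup like this, each intermediate vector would be handed to the image model as its starting noise, so consecutive clips change gradually instead of jumping from one variation to the next.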

What are Spectrograms?

A spectrogram is a visual representation of a signal’s frequency spectrum as it changes over time. When applied to an audio signal, spectrograms are sometimes called sonographs, voiceprints, or voicegrams. When the data is drawn as a 3D plot, it may be called a waterfall display.

Spectrograms are widely used in a variety of disciplines, including music, linguistics, sonar, radar, speech processing, and seismology. Audio spectrograms can be used to analyze animal calls and to identify spoken words phonetically.

A spectrogram can be produced by an optical spectrometer, a bank of band-pass filters, the Fourier transform, or a wavelet transform. When a wavelet transform is used, the result is also known as a scaleogram or scalogram.

The Future of Riffusion

Riffusion is more of a “wow, look at this” demonstration than a huge scheme to change music, and Forsgren and Martiros said they were just pleased to see people interacting with, enjoying, and improving their work:

We might go in a lot of different directions from here, and we’re eager to keep learning as we go. It’s been interesting to see how other people have built their own concepts on top of our code already this morning. How quickly individuals build upon ideas in ways that the original writers can’t expect is one of the remarkable things about the Stable Diffusion community.

You can try a live demo at Riffusion.com, but be prepared to wait a little while for your clip to appear, since the project received more attention than its creators had anticipated. The code is all accessible via the about page, so if you have the resources, you are welcome to run your own instance.

Riffusion is impressive and unsettling in equal measure, even if we can’t pretend to grasp exactly how it all works. The technology is still in its infancy, but it is easy to imagine how powerful it could eventually become.
