Beginner’s Guide to Stable Diffusion AI

This beginner’s guide is intended for people who have never used Stable Diffusion or any other AI image generator. It covers how to start generating images, how to build good prompts, which parameters matter, and how to fix common defects.

What is a Stable Diffusion AI Image?

An AI model called Stable Diffusion creates images from text input. For example, you may enter the prompt: “Generate photos of a gingerbread house.”

The AI model generates images that match the prompt:

Stable Diffusion AI Image

Advantages of Stable Diffusion AI:

Open-source: Numerous hobbyists have built free, powerful tools around it.

Designed for low-power PCs: It is free to use and inexpensive to run.

How to Start Generating AI Images?

Online Generator

For total beginners, we advise using a free online generator. Enter the example prompt from above into one of the websites on the list, and you’re good to go!

Advanced GUI

The drawback of online generators is that their capabilities are rather limited.

Once you outgrow them, you should switch to a more sophisticated GUI (Graphical User Interface). We recommend AUTOMATIC1111 since it’s a powerful and popular option.

If you have a GPU with 4 GB of VRAM or more, running it on your own PC is also a viable choice. See the Windows installation guide.

With an advanced GUI, you can refine your images using more advanced techniques such as keyword blending and inpainting.

How to Build Good Prompts?

Using a prompt generator is an excellent way to learn important keywords and how a prompt is structured. It’s crucial for newcomers to become familiar with a handful of effective keywords and their expected effects. This is comparable to learning the vocabulary of a new language.

Reusing existing prompts is a quick way to create good-quality images. The drawback is that you might not understand why those prompts produce excellent images. Read any accompanying notes and modify the prompt to see how the result changes.

You can also use Lexica or other AI image database websites: pick an image you like and reuse its prompt. The drawback is that finding a good prompt is difficult, like looking for a needle in a haystack. The collection is not curated, and there is no mechanism for removing poor-quality prompts.

Treat the prompt as a starting point!

Tips to Build Good Prompts

Good prompts are the key to good, specific AI images. Follow these tips:

Be Detailed and Specific in Your Prompts

However far AI has come, Stable Diffusion still can’t read your mind. To create good images, you must provide as many specifics about your image as you can.

For instance, suppose you want an image of a woman sitting on a chair. You could write a vague prompt or a specific one:

Prompt 1 (vague): woman sitting on chair

Prompt 2 (specific): a young gorgeous woman with long blonde hair sitting on a 19th-century antique chair, beautiful eyes, symmetric face, dedicated modern clothing, photo

Left: Vague, Right: Specific

Use of Powerful Keywords

Simply put, some keywords have a stronger effect than others. Examples include:

- A famous person (e.g. Emma Watson)

- Artist’s name (e.g. Van Gogh)

- Genre (e.g. illustration, painting, photograph)

Used with care, they steer the image in the desired direction.
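The tips above can be sketched as a small helper that joins a subject, descriptive details, and style keywords into one comma-separated prompt. This is only an illustration: `build_prompt` and its parameter names are hypothetical and do not belong to any Stable Diffusion tool.

```python
def build_prompt(subject, details=(), keywords=()):
    """Join a subject, descriptive details, and style keywords into one prompt."""
    parts = [subject, *details, *keywords]
    # Drop empty entries and stray whitespace while keeping the original order.
    cleaned = [part.strip() for part in parts if part and part.strip()]
    return ", ".join(cleaned)


# A vague prompt: only the subject.
vague = build_prompt("woman sitting on chair")

# A specific prompt: subject plus details and a genre keyword.
specific = build_prompt(
    "a young woman with long blonde hair sitting on a 19th-century antique chair",
    details=["beautiful eyes", "symmetric face"],
    keywords=["photo"],
)

print(vague)
print(specific)
```

Keeping the subject, details, and keywords separate makes it easy to change one part at a time and see how the image responds.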

For more information about prompt construction and sample keywords, see the basics of building prompts.

Important Parameters for a Stable Diffusion AI Image

Most online generators let you change only a small number of settings. Here are the most important ones:

Image size: The dimensions of the final image. The size is typically 512 by 512 pixels. The image may be significantly altered by changing the size to portrait or landscape. Use portrait size, for instance, to create a full-body image.

Sampling steps: Use at least 20. If the image looks blurry, increase this value.

CFG scale: A typical value is 7. Increase it if you want the image to adhere to the prompt more closely.

Seed: A value of -1 produces a random image each time. If you want to reproduce the same image, provide a fixed value.
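The settings above can be collected in one place before they are handed to a generator. The sketch below is a minimal illustration, not any tool's actual API: `GenerationSettings`, `resolve_seed`, and `to_payload` are hypothetical names, and it assumes the common convention that a seed of -1 means "pick a random seed". Check your generator's documentation for the field names it really expects.

```python
import random
from dataclasses import dataclass, asdict


@dataclass
class GenerationSettings:
    """Hypothetical container for the settings discussed above."""
    prompt: str
    width: int = 512        # typical default size
    height: int = 512
    steps: int = 20         # sampling steps
    cfg_scale: float = 7.0  # how closely to follow the prompt
    seed: int = -1          # assumed convention: -1 requests a random seed


def resolve_seed(seed: int) -> int:
    """Return a concrete seed, drawing a random one when seed is -1."""
    if seed == -1:
        return random.randrange(2**32)
    return seed


def to_payload(settings: GenerationSettings) -> dict:
    """Validate the settings and turn them into a plain dictionary."""
    if settings.width <= 0 or settings.height <= 0:
        raise ValueError("width and height must be positive")
    if settings.steps < 1:
        raise ValueError("steps must be at least 1")
    payload = asdict(settings)
    payload["seed"] = resolve_seed(settings.seed)
    return payload


portrait = GenerationSettings(
    prompt="Generate photos of a gingerbread house.",
    width=512, height=768,  # portrait orientation
    steps=30, seed=1234,    # fixed seed so the same image can be requested again
)
print(to_payload(portrait))
```

Recording the resolved seed alongside the other settings is what makes a result repeatable later.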

Common Ways to Fix Defects in Images

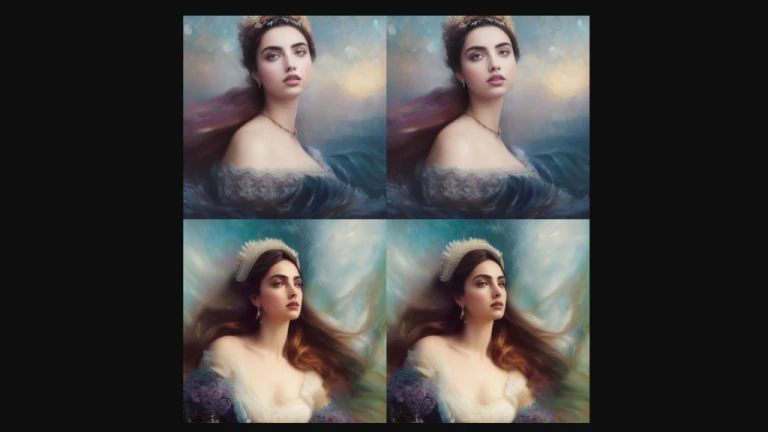

There’s a significant likelihood that the gorgeous AI images you see shared on social media have gone through a number of post-processing stages. Some of them will be covered in this section.

Face Restoration

It is well known in the AI artist community that Stable Diffusion struggles to produce realistic faces. As a result, generated faces frequently contain artifacts.

A common fix is to use an AI model trained specifically for face restoration, such as CodeFormer, support for which is built into the AUTOMATIC1111 GUI.

Face Restoration, Left: Original, Right: After Face Restoration

Inpainting

Getting a perfect image in one go is difficult. A better strategy is to aim for an image with a strong composition and then fix the imperfections with inpainting.

An example of an image before and after inpainting is shown below. Inpainting with the original prompt is effective 90% of the time. Make sure high resolution fix is activated.

Inpainting, Left: Original image with defects, Right: The face and arm are fixed by inpainting.
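Conceptually, inpainting regenerates only a masked region and leaves the rest of the image untouched. The toy sketch below shows just that final compositing idea on small grids of numbers. It does not run a diffusion model, the `composite` function is hypothetical, and real tools typically also soften the mask edges.

```python
def composite(original, generated, mask):
    """Take pixels from `generated` where the mask is 1, else from `original`."""
    if not (len(original) == len(generated) == len(mask)):
        raise ValueError("all grids must have the same number of rows")
    result = []
    for o_row, g_row, m_row in zip(original, generated, mask):
        if not (len(o_row) == len(g_row) == len(m_row)):
            raise ValueError("all rows must have the same length")
        result.append([g if m else o for o, g, m in zip(o_row, g_row, m_row)])
    return result


original = [[1, 1, 1],
            [1, 9, 1]]   # 9 marks a defect
generated = [[5, 5, 5],
             [5, 2, 5]]  # stand-in for newly generated content
mask = [[0, 0, 0],
        [0, 1, 0]]       # 1 = repaint this pixel

fixed = composite(original, generated, mask)
print(fixed)  # [[1, 1, 1], [1, 2, 1]]
```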

Custom Models for Stable Diffusion AI Images

Base models are the official models released by Stability AI and its collaborators. They include Stable Diffusion 1.4, 1.5, 2.0, and 2.1, to name a few.

Custom models are trained from these base models. Most custom models today were trained from v1.4 or v1.5. They are trained on additional data to produce images in particular styles or of particular objects.

With custom models, the sky is the limit. You name it: anime style, Disney style, or the style of another AI.

It is also easy to merge two models to produce a style in between them, which makes merging an excellent creative tool!
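One common way to merge two models is a weighted sum of their weights. The article does not say which method a given tool uses, so treat the following as a sketch under that assumption. It uses plain Python lists in place of real model tensors, and `merge_weights` and the layer names are hypothetical.

```python
def merge_weights(model_a, model_b, alpha=0.5):
    """Blend two models: (1 - alpha) * A + alpha * B, layer by layer."""
    if not 0.0 <= alpha <= 1.0:
        raise ValueError("alpha must be between 0 and 1")
    if model_a.keys() != model_b.keys():
        raise ValueError("both models must have the same layers")
    merged = {}
    for name in model_a:
        a, b = model_a[name], model_b[name]
        if len(a) != len(b):
            raise ValueError(f"layer {name!r} has mismatched sizes")
        merged[name] = [(1 - alpha) * x + alpha * y for x, y in zip(a, b)]
    return merged


# Toy "models": each layer is a short list of weights.
style_a = {"layer1": [0.0, 2.0], "layer2": [4.0]}
style_b = {"layer1": [1.0, 0.0], "layer2": [0.0]}

# alpha = 0.25 keeps the result closer to style_a.
blend = merge_weights(style_a, style_b, alpha=0.25)
print(blend)  # {'layer1': [0.25, 1.5], 'layer2': [3.0]}
```

With `alpha` at 0 the result equals the first model, at 1 it equals the second, and values in between give intermediate styles.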
